Security teams often struggle because of noise, context gaps, and the slow drift between what they see internally and what is happening outside their estate. Integrating open-source threat intelligence with Elastic SIEM often starts as an efficiency project. In practice, it becomes a question of discipline.

Open-source intelligence feeds are widely available. Many are free. Some are community maintained. A few are exceptionally good. The difficulty is not access. It is making that intelligence usable inside Elastic without overwhelming analysts or corrupting detection logic.

Elastic SIEM is flexible. That flexibility is both strength and risk. Poor integration choices linger for years.

Why Open-Source Intelligence Matters in Elastic Environments

Elastic ingests logs well. Endpoint telemetry, authentication records, firewall events, cloud audit trails. The platform can correlate across them effectively. What it does not natively know is whether a remote IP has been associated with a recent phishing kit, or whether a file hash appeared in a ransomware campaign last week.

Open-source feeds fill that gap.

They add context to otherwise routine events. A failed login from an unfamiliar address might remain low priority. The same address tied to active command and control infrastructure changes the response posture immediately.

However, not all feeds are equal. Some provide stale indicators. Others recycle data from paid services. Some lack curation entirely. Blindly feeding them into Elastic creates alert fatigue at scale.

A team that integrates open-source intelligence without validation often ends up distrusting its own alerts within months.

Choosing the Right Intelligence Sources

Before touching Elastic configuration, the selection process deserves attention.

Some feeds focus on IP reputation. Others specialise in malware hashes, phishing domains, or botnet tracking. A financial services organisation may value credential stuffing intelligence more than industrial control system indicators. Context matters.

Reputation-based lists are often the easiest to consume. They are also the noisiest. Attack infrastructure changes rapidly. An IP listed yesterday might be reassigned today. Without expiry controls, Elastic will continue flagging irrelevant traffic.

Threat intelligence that includes metadata is more useful. Confidence scores, first seen timestamps, associated threat actor information. These elements allow analysts to weigh risk rather than react automatically.

It is tempting to subscribe to everything. Mature teams usually reduce rather than expand feed volume over time.

Technical Integration with Elastic SIEM

Elastic supports threat intelligence ingestion through several mechanisms: the Filebeat threat intelligence module, Elastic Agent threat intelligence integrations, custom ingest pipelines, and API-driven imports. The method matters less than the discipline behind it.

Indicators need normalisation. IP addresses, domains, hashes, URLs. Consistent field mapping is essential. If an IP from a feed lands in a custom field rather than the expected ECS field, such as threat.indicator.ip, correlation rules may never trigger.
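A minimal sketch of that normalisation step, assuming a hypothetical raw feed record with `kind`, `value`, `first_seen` and `source` keys. The target names follow the ECS `threat.indicator.*` field set; the raw record shape, the `ECS_TARGETS` table and the function name are illustrative assumptions, not part of any Elastic API.

```python
import ipaddress
from datetime import datetime, timezone

# Hypothetical mapping from a feed's own type labels to an ECS indicator type
# and the ECS field that should hold the value.
ECS_TARGETS = {
    "ip": ("ipv4-addr", "threat.indicator.ip"),
    "domain": ("domain-name", "threat.indicator.url.domain"),
    "url": ("url", "threat.indicator.url.full"),
    "sha256": ("file", "threat.indicator.file.hash.sha256"),
}


def normalise_indicator(raw: dict) -> dict:
    """Map one raw feed record (assumed shape) onto flat ECS-style field names."""
    kind = raw["kind"].strip().lower()
    if kind not in ECS_TARGETS:
        raise ValueError(f"unsupported indicator kind: {kind!r}")
    ecs_type, value_field = ECS_TARGETS[kind]

    value = raw["value"].strip()
    if kind == "ip":
        # Validate and canonicalise; raises ValueError on malformed input.
        addr = ipaddress.ip_address(value)
        value = str(addr)
        if addr.version == 6:
            ecs_type = "ipv6-addr"
    elif kind in ("domain", "sha256"):
        value = value.lower()

    doc = {
        "threat.indicator.type": ecs_type,
        value_field: value,
        "threat.indicator.provider": raw.get("source", "unknown"),
    }
    if "first_seen" in raw:
        # Assumes the feed supplies Unix epoch seconds.
        ts = datetime.fromtimestamp(raw["first_seen"], tz=timezone.utc)
        doc["threat.indicator.first_seen"] = ts.isoformat()
    return doc
```

Rejecting unknown indicator kinds outright, rather than writing them to a catch-all field, is a deliberate choice here: a record that fails loudly at ingest is easier to notice than a rule that silently never matches.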

Performance also becomes a concern. Large indicator sets slow queries. Index design must reflect how frequently data will be queried and how long it remains relevant.

Teams often underestimate storage implications. Retaining six months of short-lived indicators offers little security value but increases index size significantly.

Some organisations choose to maintain a dedicated threat intelligence index with strict lifecycle policies. Indicators expire automatically after defined periods. Others tag feeds with confidence levels and use that metadata within detection rules.
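The expiry idea can be sketched outside Elastic as follows. The retention windows, the `type` and `last_seen` keys, and the function names are assumptions chosen for illustration; inside Elastic the same effect would typically come from an index lifecycle policy or a scheduled clean-up job rather than application code.

```python
from datetime import datetime, timedelta, timezone

# Illustrative retention windows per indicator type; the values are assumptions.
TTL_BY_TYPE = {
    "ipv4-addr": timedelta(days=14),
    "domain-name": timedelta(days=30),
    "url": timedelta(days=30),
    "file": timedelta(days=180),
}
DEFAULT_TTL = timedelta(days=30)


def is_expired(indicator: dict, now: datetime) -> bool:
    """Return True when the indicator was last seen longer ago than its TTL."""
    ttl = TTL_BY_TYPE.get(indicator["type"], DEFAULT_TTL)
    return now - indicator["last_seen"] > ttl


def prune(indicators: list, now: datetime) -> list:
    """Keep only indicators that are still inside their retention window."""
    return [i for i in indicators if not is_expired(i, now)]
```

Short windows for IP addresses and longer ones for file hashes reflect the point made earlier: addresses are reassigned quickly, while a malicious hash stays malicious.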

Neither approach is universally correct. What matters is intent.

Designing Correlation Logic That Reflects Reality

There is a common misconception that integrating open-source threat intelligence with Elastic SIEM automatically improves detection. It does not. It increases detection surface area. Whether that results in better security depends on rule design.

Correlation should consider:

- Confidence level of the indicator

- Recency of the intelligence

- Asset criticality

- User behaviour context

An outbound connection to a flagged IP from a development workstation differs from the same connection originating from a domain controller.

Elastic allows this contextual layering, but it requires deliberate engineering. Simple match rules tend to generate noise. Context-enriched rules reduce unnecessary alerts but require more effort upfront.
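One way to picture that layering is a score that combines the four factors listed above. This is a sketch only: the weights, the 30-day window, the 1 to 5 criticality scale and the function name are all assumptions, and a real deployment would express this in detection rule logic rather than standalone Python.

```python
from datetime import datetime, timedelta, timezone

MAX_AGE_DAYS = 30  # assumed window after which an indicator carries no weight


def match_priority(confidence, last_seen, asset_criticality, now,
                   unusual_for_user=False):
    """Score an indicator match from 0 to 100 using assumed, illustrative weights.

    confidence: feed-supplied score, 0-100
    last_seen: timezone-aware datetime of the latest sighting
    asset_criticality: 1 (e.g. development workstation) to 5 (e.g. domain controller)
    unusual_for_user: True when the activity deviates from the user's baseline
    """
    age_days = (now - last_seen).total_seconds() / 86400
    # Linear decay from 1.0 (seen now) to 0.0 (MAX_AGE_DAYS old or older).
    recency = min(1.0, max(0.0, 1.0 - age_days / MAX_AGE_DAYS))
    asset_weight = 0.5 + 0.1 * asset_criticality  # ranges from 0.6 to 1.0
    score = confidence * recency * asset_weight
    if unusual_for_user:
        score += 10
    return min(100.0, round(score, 1))
```

With these assumed weights, the same indicator seen three days ago scores higher on a domain controller than on a development workstation, and an indicator older than the window contributes nothing on its own.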

Some teams phase their integration. Indicators are ingested first but used only for enrichment in dashboards. Alerting is introduced later, once patterns are understood.

That slower approach often prevents operational fatigue.

How Intelligence Flows in Practice

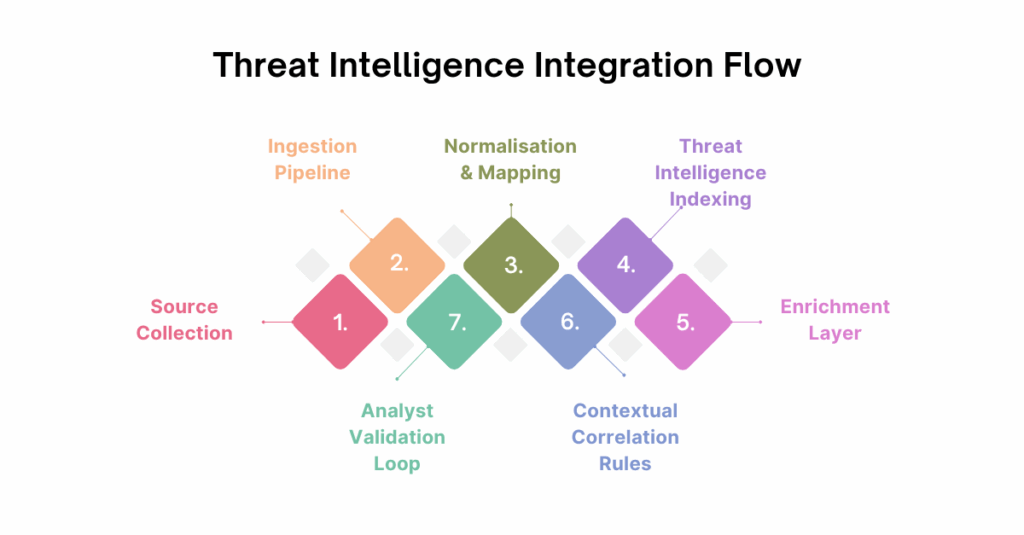

It helps to visualise how intelligence flows from external sources into detection and response. The following structure works well for diagramming and internal design discussions.

Below is a simplified integration flow that many teams adopt:

1. Source Collection

Curated open-source feeds are selected and periodically reviewed.

2. Ingestion Pipeline

Indicators are fetched through an API or file download and parsed into structured fields.

3. Normalisation and Mapping

Data is aligned to Elastic Common Schema fields for compatibility.

4. Threat Intelligence Indexing

Indicators are stored in a dedicated index with lifecycle and expiry policies.

5. Enrichment Layer

Incoming logs are automatically enriched at ingest or query time.

6. Contextual Correlation Rules

Detection logic references both internal telemetry and external intelligence.

7. Analyst Validation Loop

False positives are reviewed, and feed quality is reassessed regularly.

Each stage influences the next. Skipping governance at stage one tends to create friction at stage seven.

This is rarely captured in project plans, but it should be.
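The enrichment stage in the flow above can be sketched as a simple lookup. The event and indicator shapes, the default field list and the function names are assumptions; the `threat.enrichments` key is loosely modelled on the ECS field set of the same name, and Elastic performs this step through its own enrichment and rule mechanisms rather than code like this.

```python
def build_lookup(indicators):
    """Index indicators by their value for constant-time matching."""
    return {i["value"]: i for i in indicators}


def enrich_event(event, lookup, fields=("source.ip", "destination.ip")):
    """Return a copy of the event with any indicator matches attached."""
    matches = []
    for field in fields:
        value = event.get(field)
        if value in lookup:
            matches.append({
                "matched.field": field,
                "matched.atomic": value,
                "indicator": lookup[value],
            })
    enriched = dict(event)  # leave the original event untouched
    if matches:
        enriched["threat.enrichments"] = matches
    return enriched
```

Keeping enrichment separate from alerting, as here, matches the phased approach described earlier: matches can be surfaced in dashboards first and wired into detection rules once the patterns are understood.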

Operational Friction and Analyst Trust

A security operations centre lives on trust. If analysts begin dismissing alerts as irrelevant, integration efforts lose value quickly.

Open-source indicators often lack clarity around confidence. A domain might be listed due to shared hosting abuse. Blocking or alerting on every match creates unnecessary escalation.

Over time, experienced teams refine their use of intelligence. Low-confidence indicators may be used only to raise priority rather than trigger alerts. High-confidence indicators might initiate automated containment in specific scenarios.
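That tiering might look like the sketch below. The thresholds, the action names and the `containment_allowed` flag are assumptions for illustration; each team would set its own cut-offs and decide which scenarios justify automation.

```python
def decide_action(confidence, containment_allowed=False):
    """Map an indicator confidence score (0-100) to a response tier.

    containment_allowed should be True only for scenarios the team has
    explicitly approved for automated containment.
    """
    if confidence >= 90 and containment_allowed:
        return "contain"
    if confidence >= 70:
        return "alert"
    if confidence >= 30:
        return "raise_priority"
    return "enrich_only"
```

Note that a very high score without explicit approval still only alerts. Automation is opt-in per scenario, never a side effect of the score alone.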

Elastic supports flexible rule tuning. The platform does not solve judgement errors.

Regular feed review sessions are often neglected. Intelligence sources should be evaluated quarterly. Indicators that consistently generate noise should be retired. This discipline separates effective integration from decorative implementation.
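A review session is easier to run with a per-feed noise summary in hand. The sketch below assumes analysts record a verdict of `true_positive` or `false_positive` against each alert along with the feed that triggered it; the input shape and both thresholds are assumptions.

```python
from collections import Counter


def feed_noise_report(dispositions, min_alerts=20, max_fp_rate=0.8):
    """Summarise analyst verdicts per feed and flag noisy feeds for review.

    dispositions: iterable of (feed_name, verdict) pairs, where verdict is
    "true_positive" or "false_positive".
    """
    totals, false_pos = Counter(), Counter()
    for feed, verdict in dispositions:
        totals[feed] += 1
        if verdict == "false_positive":
            false_pos[feed] += 1

    report = {}
    for feed, total in totals.items():
        rate = false_pos[feed] / total
        report[feed] = {
            "alerts": total,
            "fp_rate": rate,
            # Only flag feeds with enough alerts for the rate to be meaningful.
            "review": total >= min_alerts and rate > max_fp_rate,
        }
    return report
```

The minimum alert count guards against retiring a feed on the strength of a handful of matches.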

Governance and Risk Considerations

Open-source intelligence introduces its own risk profile. Feeds may contain inaccurate data. Some may be compromised. Supply chain thinking applies here as much as it does to software dependencies.

Organisations operating in regulated sectors should consider documentation. Where does the intelligence originate? How often is it updated? What is the confidence model?

Auditors increasingly ask how detection logic is maintained. A vague answer about community feeds is rarely sufficient.

Elastic provides audit capabilities around rule changes and data ingestion. These logs are useful not only for security but for demonstrating control maturity.

Measuring Value Without Inflating Metrics

It is tempting to measure success through the number of matches against threat feeds. This is misleading.

A large number of matches may indicate excessive noise. A low number may indicate effective prevention controls or poor feed relevance.

More meaningful metrics include:

- Reduction in time to triage high-confidence alerts

- Improved contextual clarity during investigations

- Decrease in analyst escalations due to uncertainty

These outcomes are harder to quantify but closer to operational reality.
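The first of those metrics is the most straightforward to compute. A minimal sketch, assuming each alert record carries hypothetical `created_at` and `triaged_at` datetimes:

```python
from datetime import datetime
from statistics import median


def median_triage_minutes(alerts):
    """Median minutes from alert creation to triage, ignoring untriaged alerts."""
    durations = [
        (a["triaged_at"] - a["created_at"]).total_seconds() / 60
        for a in alerts
        if a.get("triaged_at") is not None
    ]
    return median(durations) if durations else None
```

Comparing this figure for high-confidence alerts before and after an integration change says more about value than a raw match count does. The median is used rather than the mean so that a few long-running investigations do not dominate.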

Integrating open-source intelligence is not about proving volume. It is about improving decision quality.

When to Reassess the Integration Strategy

Threat landscapes evolve. Infrastructure hosting providers change policies. Attackers adapt.

A feed that was highly valuable two years ago may now add little value. Elastic environments also change. New data sources, new cloud workloads, new identity providers.

Periodic reassessment prevents drift. Integration should not remain static simply because it works technically.

Teams that revisit their intelligence model annually tend to maintain higher signal quality. Those that do not often accumulate technical debt quietly.

Conclusion

Integrating open-source threat intelligence with Elastic SIEM can strengthen detection and investigative depth, but only when approached with restraint and structure. The technology itself is not the challenge. The challenge lies in feed selection, lifecycle management, contextual rule design, and ongoing validation.

Poorly governed intelligence creates noise and weakens analyst confidence. Carefully integrated intelligence sharpens visibility and improves response decisions without overwhelming operations.

Security platforms evolve. Intelligence sources shift. What remains constant is the need for disciplined integration rather than accumulation.

The post Integrating Open-Source Threat Intelligence with Elastic SIEM: Practical Realities from the Field appeared first on Trade Brains Features.